Intelligence Crisis or Explosion of Opportunities?

A Response to the Citrini Report on AI and Economic Disruption

By Juan Ramón Rallo | Quality Value Research

Last Sunday, an article published by Citrini—one of the most recognized research firms in the sector—generated an intense debate on social media and in financial outlets. Bloomberg went so far as to identify it as one of the catalysts for the correction that software companies suffered on Monday the 23rd.

The report sketches a scenario set in 2028 in which artificial intelligence, by driving exponential productivity growth, triggers a disruption so large that it ends up eroding the real economy and generating deep structural imbalances.

It is an ambitious, carefully constructed, and well-documented analysis. In our view, however, it rests on debatable economic assumptions and omits adjustment mechanisms that have historically operated during technological transformations.

To address this question with the rigor it deserves, we are joined today by one of the most influential economists in macroeconomic analysis in the Spanish-speaking world: Juan Ramón Rallo.

Rallo holds a PhD in Economics, is a university professor, and is one of the leading contemporary exponents of classical liberal thought and the Austrian school. His work combines theoretical depth, historical analysis, and a talent for communicating complex ideas, which makes him an essential reference for interpreting major structural transformations like the one we are living through.

Furthermore, we are pleased to announce that this collaboration will not be a one-time event. Juan Ramón Rallo joins as editor of Quality Value’s new Economics section, a space dedicated to macroeconomic and structural analysis that will complement our investment theses and thematic reports, allowing our readers to integrate the macro context into their capital allocation process.

Without further ado, we present this first article, whose objective is to identify the economic mechanisms that will likely operate during this transition toward the new technological paradigm, as well as the theoretical errors that can distort its interpretation—without claiming to anticipate either the timeline or the specific form in which these processes will materialize.

Intelligence Crisis or Explosion of Opportunities?

An article by CitriniResearch has generated considerable commotion on social media in recent days, and it is not difficult to understand why. The scenario it describes—written as if it were a macro memo from June 2028—is, to say the least, unsettling.

Its central thesis can be summarized as follows: AI is generating unprecedented productivity growth, but that growth is structurally incapable of circulating through the real economy because it destroys precisely the base of labor income upon which the entire architecture of American consumption, credit, and taxation rests. Machines produce, but they do not spend. Displaced workers spend less. Companies reinvest their savings in more AI, which displaces more workers. The dynamic feeds back on itself without natural restraint, and its acceleration threatens to successively fracture the private credit market, the mortgage market, and ultimately the solvency of the federal government itself. What Citrini calls “Ghost GDP”—output that appears in the national accounts but never reaches anyone’s pockets—would be the central pathology of this new cognitive capitalism.

It is an elaborate, well-documented argument and, in several of its descriptive passages, probably correct.

The problem is that it confuses the transition with the destination.

Everything Citrini describes (the pain of labor displacement, financial stress, the inadequacy of inherited contracts, the slowness of the institutional response) is a recognizable feature of an economy undergoing deep restructuring between two productive regimes. What Citrini does not demonstrate, though he assumes it on every page, is that these transitory features constitute the steady state of the new regime.

And that confusion, between the cost of adjustment and the nature of the equilibrium reached, rests on one of the oldest and most persistent errors in the history of economic thought.

The Radical Uncertainty of the Genuinely New

Before entering the substantive critique, it is worth pausing on a point that Citrini himself acknowledges in passing but whose implications he does not draw: making forecasts about the economic impact of AI is extraordinarily difficult. Not with the usual difficulty of any technological forecast, but with a qualitatively different difficulty. And the reason is exactly what Citrini himself identifies as the foundation of his thesis: this time is different.

If AI is, as Citrini and many others argue, the first technology in history that makes capital and labor substitutive factors—the first globally substitutive innovation for human work, not in a specific task but in a growing range of cognitive capabilities—then we are facing a genuinely unprecedented phenomenon.

And when we face something genuinely unprecedented, predictive capacity falls to a minimum, because there is no past experience on which to build reliable extrapolations. Precisely because this time is different, no one inside the transition can map the regime that will emerge from it. But the uncertainty is not only quantitative (we do not know how much, or how fast).

It is existential in a literal sense that no other technology has posed.

The range of possible outcomes from AI does not go from “we grow a little more” to “we grow a little less.” It goes from material prosperity unprecedented in human history to the literal extinction of the species. The steam engine did not pose existential risks. Neither did electricity. Even nuclear energy, which did pose them, was a technology of specific application whose risk could be geographically and politically bounded. AI is a general-purpose intelligence that improves recursively and whose alignment with human ends remains an open problem among the world’s best specialists.

The Financial Times published a chart months ago that illustrates this duality clearly: two extreme scenarios—one of technological stagnation and another of uncontrolled superintelligence—flank a central scenario of gradual and relatively orderly integration. The actual range of possibilities is incomparably wider than that of any previous innovation.

And here appears a revealing irony in Citrini’s article. His scenario implicitly assumes that humanity solves the hard problem: the alignment problem. In his 2028 memo, AI continues improving, continues being extraordinarily capable, and at no point does it misalign from human ends or generate an existential catastrophe. We survive superintelligence. What we do not survive, according to him, is the adjustment of the mortgage market. Humanity solves the unprecedented challenge of controlling a recursively improvable general intelligence and drowns in a problem that, by comparison, is institutional and a matter of design: how to redistribute income when the channel through which it flows changes.

It is epistemologically reckless to construct a scenario with tickers, dates, and precise causal chains from within a phenomenon whose range of outcomes includes human extinction. None of this invalidates Citrini’s exercise as a scenario. What it invalidates is its implicit claim to be a probable description. What we can do, and what this article will attempt, is identify the economic mechanisms that will likely operate in the transition (competition, cheapening, democratization of access to capital) and the theoretical errors that prevent Citrini from seeing them, without pretending to know when, how fast, or in what concrete form they will materialize.

The Pain of the Transition Is Real

Before explaining why Citrini’s underlying diagnosis is erroneous, it is worth making clear what is correct about it, because the concession is not rhetorical but substantive.

What AI threatens to destroy—if it develops as much as Citrini and many others postulate—is not human capital in the abstract but specific human capital: the expertise accumulated in concrete tasks that would become obsolete.

Think of the product manager specialized in Salesforce, the financial analyst who masters standard valuation models, the junior developer who translates specifications into routine code, the insurance agent who monetizes client inertia, or the consultant whose added value consisted of navigating complexities that a machine no longer finds tedious. If AI reaches the capabilities that Citrini describes, the decapitalization of these cohorts could be real and irreversible within the horizon of their working lives. This should not be minimized.

Citrini narrates concrete stories—the Salesforce product manager who goes from $180,000 annually to driving an Uber for $45,000—and those stories, if they materialize, would be the archetype of what happens when a technological revolution decapitalizes the specific know-how of a generation. The artisans of the Industrial Revolution were not wrong to perceive that their world was crumbling, even though humanity as a whole came out ahead. The human cost of the transition is not a minor side effect of progress; it is its inevitable shadow, and any analysis that ignores it deserves the reproach of frivolity. Citrini’s does not ignore it. Neither does mine.

But recognizing the pain of the transition is not the same as accepting Citrini’s diagnosis about its deep causes, because that diagnosis extrapolates the symptoms of adjustment and presents them as if they were the description of a new permanent regime. And that is where the analysis goes astray.

This Time Is Different—But Not for the Reason Citrini Believes

There is a legitimate objection to all of the above that Citrini himself formulates and that is worth taking seriously: this time is different. In all previous technological revolutions, capital and labor were complementary factors. The steam engine needed operators. Electricity needed engineers. The computer needed programmers. The assembly line needed, at another level, designers, managers, supervisors.

Each innovation increased the productivity of human labor and, with it, its remuneration. ATMs made bank branches cheaper, so banks opened more of them and hired more employees for higher-value-added tasks. The internet destroyed travel agencies but created entire industries—e-commerce, social networks, the platform economy—that absorbed far more employment than was destroyed. The pattern repeated with such regularity that it acquired the force of natural law: technology destroys jobs and then creates more.

Citrini points out, rightly, that this pattern rested on a premise that AI may be breaking. Each new job created by technology required a human to perform it, because technology was only capable of executing specific and bounded tasks: capital complemented labor.

AI, for the first time, is a general-purpose technology that improves precisely in the tasks to which displaced workers would redirect themselves. It is not a machine that automates one task and frees the human for another; it is a general intelligence that competes with humans in a growing range of cognitive tasks and that improves in those tasks faster than humans can retrain. The relationship between capital and labor may be ceasing to be complementary and becoming substitutive. That is genuinely new, genuinely disruptive, and minimizing it would be an error as grave as Citrini’s catastrophism but in the opposite direction.

But the fact that capital and labor become substitutes does not imply what Citrini concludes. And here is the decisive step his analysis fails to take.

Capital is not an autonomous entity that operates by itself.

Capital is owned by someone.

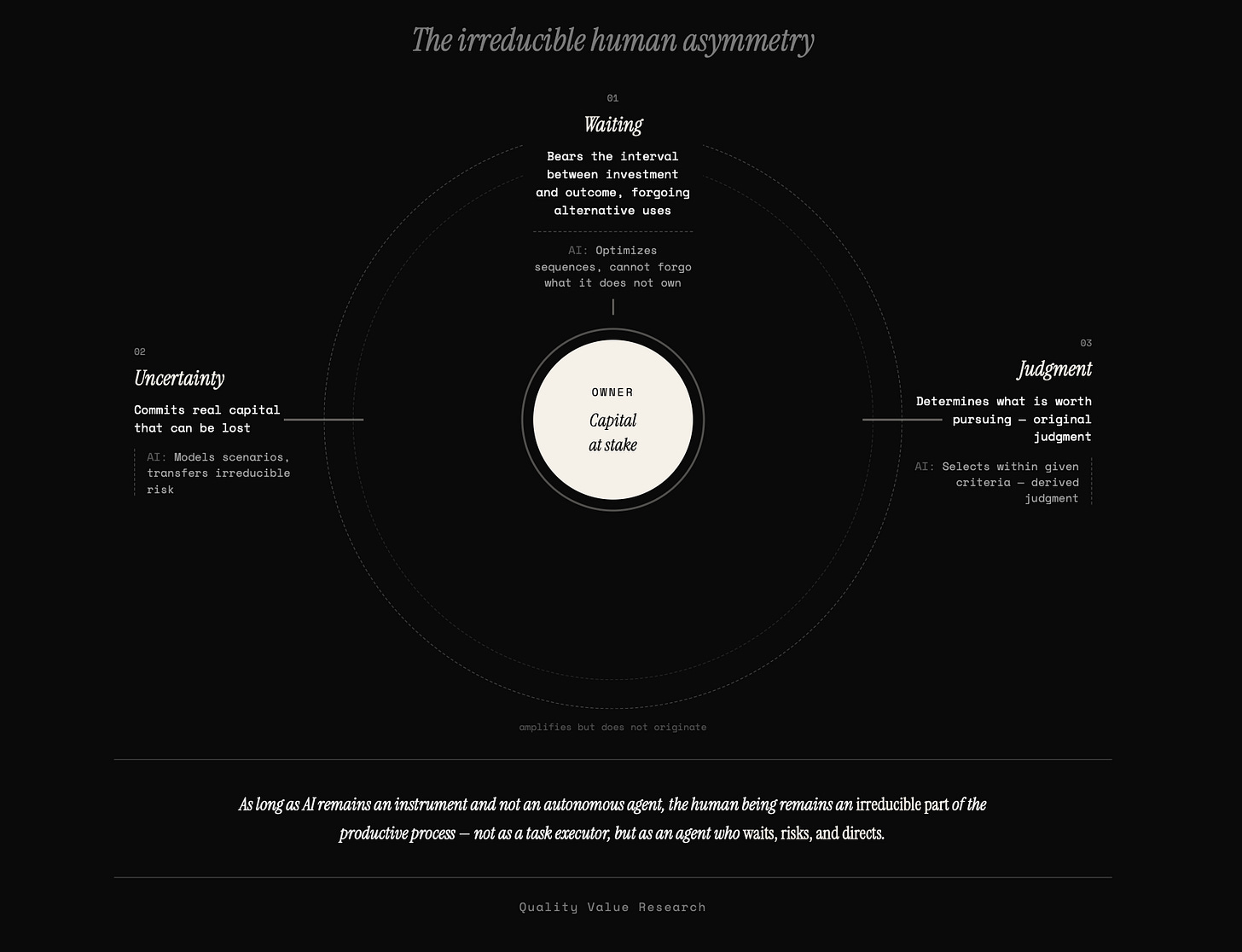

And being an owner of capital is not a passive relationship of mere legal title: it is the continuous exercise of three functions that can be partially delegated to the machine but never entirely as long as a property relationship exists between a human subject and a productive object.

The first is waiting: every productive process requires that someone financially sustain the interval between the initial investment and the final result, renouncing the alternative uses of those resources during that period. AI can optimize the sequence of a project, but it cannot be the one who renounces the alternative uses of a capital it does not possess. The waiting falls on whoever owns the resources, and that someone is, in a human economy, a human being.

The second is the absorption of uncertainty: someone must commit their patrimony to a project whose outcome is not guaranteed, and only someone who has something to lose can genuinely absorb that uncertainty. AI can refine predictions, model scenarios, and reduce residual uncertainty, but it cannot go bankrupt; it transfers the irreducible uncertainty entirely to whoever owns it.

The third is entrepreneurial judgment: the selection, among alternative courses of action, of the one the agent considers most valuable, together with assuming the consequences of that choice. Here it is worth distinguishing between original judgment and derived judgment. AI can exercise derived judgment: it can select among options within a framework of criteria that someone has defined for it, and it will do so with increasing efficacy. What it cannot exercise, as long as it is an instrument serving a human being rather than an autonomous agent, is original judgment: the ultimate determination of what is worth attempting and what is not, the decision to commit or withdraw when information is radically incomplete and the consequences fall on a real patrimony.

The relationship between the two types of judgment is not necessarily sequential; it is not that the human first sets the ends and the AI then operates within them. The AI may generate multiple derived proposals, explore alternatives the human would not have conceived, and present them as options. But the final choice among those options, the a posteriori decision about which of them deserves the commitment of real resources, is always original judgment, because it falls on whoever assumes the consequences. And that determination is not an act of information processing; it is an act of agency that requires a subject with their own ends and a patrimony that answers for the decision. As long as AI is an instrument and not a subject, original judgment remains in human hands.

Capital, in sum, is not a thing. Capital is waiting, risk, and entrepreneurial judgment materialized in means of production.

If capital continues to be human property—and in a human economy, where AI operates as an instrument and not as an autonomous agent, it is by definition—then the human being continues to supply the productive factors that AI cannot completely substitute.

The capital-labor relationship breaks; the capital-human owner relationship does not.

And as long as that relationship persists, the human being remains an irreducible part of the productive process—not as an executor of tasks (there AI wins) but as the agent who waits, risks, and directs. One could imagine a scenario in which AI became an autonomous agent: with its own ends, its own patrimony, and the capacity to assume consequences on its own account. In that case, the capital-human owner relationship would indeed break—but we would no longer be describing a human economy but something qualitatively different that would require its own analysis. Citrini does not pose that scenario (in fact, as we have seen, he implicitly excludes it by assuming that the alignment problem is solved), and neither do we need it for this argument.

The Myth of Ghost GDP: Through Which Channel Income Actually Flows

The argument of “Ghost GDP”—output that appears in the national accounts but never reaches anyone’s pockets—is the contemporary version of underconsumption theory, which holds that an economy can generate more output than it is capable of absorbing in the form of effective demand.

The formulation is intuitively attractive (machines do not buy protein or pay mortgages), but it commits the same error that Jean-Baptiste Say identified more than two hundred years ago and that Austrian economists later developed with greater precision: production does not destroy demand; it creates it.

The principle deserves explanation, because it is counterintuitive and because misunderstanding it is what leads to conclusions like Citrini’s.

Say’s idea is not that “everything that is produced is sold” (that is a caricature that not even Say himself held). It is something deeper: in a monetary economy, no one produces to accumulate goods without end; one produces to exchange, and the very act of producing generates the income with which to demand what others produce.

When a farmer harvests wheat, his harvest is not only supply: it is also his means to demand the shoemaker’s shoes, who in turn will use the income received to demand the carpenter’s wood. Each person’s production is the source of their demand. The aggregate income of the economy cannot be less than aggregate output, because they are the same thing viewed from opposite sides of each transaction.
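The farmer, shoemaker, and carpenter example can be written as a tiny ledger. This is a minimal sketch assuming a closed three-person economy with made-up dollar amounts; it illustrates only the accounting point that each sale is simultaneously one party's income and another's spending, not the caricatured claim, rejected above, that every good automatically finds a buyer.

```python
from collections import defaultdict

# Hypothetical ledger for the example: (seller, buyer, amount in dollars).
# The amounts are illustrative and not taken from any data source.
LEDGER = [
    ("farmer", "shoemaker", 100),    # wheat sold to the shoemaker
    ("shoemaker", "carpenter", 80),  # shoes sold to the carpenter
    ("carpenter", "farmer", 90),     # wood sold to the farmer
]


def income_and_spending(ledger):
    """Record each transaction once as the seller's income and once as the
    buyer's spending, and return both tallies."""
    income = defaultdict(int)
    spending = defaultdict(int)
    for seller, buyer, amount in ledger:
        income[seller] += amount
        spending[buyer] += amount
    return dict(income), dict(spending)


income, spending = income_and_spending(LEDGER)
print(income)  # {'farmer': 100, 'shoemaker': 80, 'carpenter': 90}
print(sum(income.values()), sum(spending.values()))  # 270 270
```

The totals match by construction: aggregate income and aggregate spending are the same transactions counted from opposite sides.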

What happens when AI produces what a worker used to produce?

Production continues to exist, and with it the income it generates. That income simply no longer reaches the worker as a salary; it reaches the owner of the AI as profit, which they spend, invest, or save (and savings, channeled through the banking system, are lent to whoever does spend or invest them). The output has not disappeared; the distribution channel has changed. Citrini observes that the old channel (the payroll) is drying up and concludes that demand evaporates.

But demand does not evaporate: it flows through another channel.

A concrete example.

Suppose a software company replaces ten analysts with an AI system. The ten analysts stop receiving their salaries—that is the pain Citrini describes, and it is real. But the company has not destroyed the output those analysts generated; it has replicated it at lower cost. The difference between the previous cost (the salaries) and the new cost (the AI subscription) is savings that becomes one of three things: profit distributed to shareholders (who spend or invest it), reinvestment in new projects (which generates new economic activity), or price reductions for the customer (which frees up disposable income for other purposes). In none of the three cases does income disappear. It is redistributed.
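The ten-analyst example can be made concrete with a few lines of arithmetic. The salary, the subscription cost, and the split across the three channels below are hypothetical assumptions chosen for illustration; the text gives no figures. The sketch shows only that, once the subscription is counted as revenue for whoever sells the AI, every dollar that used to go to payroll still reaches someone: the AI vendor, shareholders, new projects, or customers through lower prices.

```python
def redistribute_payroll(n_workers: int, salary: int, ai_cost: int,
                         shares_pct: dict[str, int]) -> dict[str, int]:
    """Split the old payroll between the AI vendor and the saving's channels.

    All amounts are whole dollars per year. shares_pct gives each channel's
    hypothetical percentage of the saving and must sum to 100.
    """
    if sum(shares_pct.values()) != 100:
        raise ValueError("channel shares must sum to 100")
    saving = n_workers * salary - ai_cost
    if saving < 0:
        raise ValueError("substitution only pays if the AI costs less than the payroll")
    flows = {"ai_vendor": ai_cost}
    names = list(shares_pct)
    allocated = 0
    for name in names[:-1]:
        flows[name] = saving * shares_pct[name] // 100
        allocated += flows[name]
    # The last channel takes the remainder so integer rounding loses no dollars.
    flows[names[-1]] = saving - allocated
    return flows


# Hypothetical figures: ten analysts at $90,000 each, an AI subscription at $60,000.
flows = redistribute_payroll(
    10, 90_000, 60_000,
    {"shareholders": 40, "reinvestment": 30, "lower_prices": 30},
)
print(flows)
# {'ai_vendor': 60000, 'shareholders': 336000, 'reinvestment': 252000, 'lower_prices': 252000}
print(sum(flows.values()) == 10 * 90_000)  # True
```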

Citrini could respond that this reasoning ignores scale: if substitution affects not one company but millions, the volume of income that changes channel may be so large, and move so fast, that the system cannot absorb the redistribution in time. It is a serious objection and deserves a serious answer. Let us grant, then, the substitution dynamic in its full extent. Whenever an AI agent’s productivity relative to its cost exceeds that of a human worker in a given task, the company will have an incentive to invest in AI and replace the worker. That process does not have to stop, and probably will not. Citrini is right about the mechanism. Where he is wrong is about the destination of the savings.

His narrative assumes that the surplus generated by substitution remains trapped within the companies that execute it, feeding a closed circuit of reinvestment in more AI that generates more substitution.

This is the “Ghost GDP.” The image is powerful, but it omits the most basic and most potent mechanism of the market economy: competition.

When a company substitutes labor for AI and obtains an extraordinary margin, that margin is a profit signal that attracts competitors—both rivals who execute the same substitution and new entrants who, precisely because AI is cheap, can replicate the operation with minimal entry costs.

Citrini himself documents this process with a precision that contradicts his own thesis: he describes how dozens of “vibe-coded” competitors fragmented DoorDash in weeks, how differentiation in SaaS collapsed because AI equalized development capabilities, how incumbents entered a “knife-fight” on prices with challengers that had no inherited cost structure. What he is describing, without recognizing it, is competition eroding the margin from substitution and transferring it downward, to the price the consumer pays.
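The erosion of the substitution margin can be sketched as repeated rounds of entry. This is a stylized model, not something Citrini or this article specifies: it assumes a constant unit cost after substitution and that each round of entry leaves sellers only a fixed fraction of their remaining markup. The $500 and $50 figures anticipate the SaaS example that follows; the 50% retention rate and the number of rounds are hypothetical.

```python
def competitive_price_path(initial_price: float, unit_cost: float,
                           markup_retained: float, rounds: int) -> list[float]:
    """Price after each round of entry in a stylized model.

    Each round keeps only `markup_retained` of the previous markup over
    unit cost, so the price converges toward unit cost.
    """
    if not 0 <= markup_retained < 1:
        raise ValueError("markup_retained must be in [0, 1)")
    prices = [float(initial_price)]
    for _ in range(rounds):
        markup = prices[-1] - unit_cost
        prices.append(unit_cost + markup * markup_retained)
    return prices


path = competitive_price_path(500, 50, 0.5, 5)
print(path)  # [500.0, 275.0, 162.5, 106.25, 78.125, 64.0625]
# What buyers save per unit, relative to the original price, after the last round:
print(500 - path[-1])  # 435.9375
```

The geometric decay is a simplifying assumption; the point is only the direction of travel, with the markup shrinking each round and the difference accruing to buyers rather than staying in the firm.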

The surplus generated by AI will tend not to stay in the company. It will circulate, not through the channel Citrini is looking at (the payroll) but through another that he systematically ignores: price reduction.

Each round of competitive substitution tends to reduce the price of the affected good or service. When SaaS that cost $500 annually drops to $50—or zero—those $450 have not disappeared from the economy: they are consumer surplus that is free to be spent on something else, or investor surplus that is free to be directed to another project. Multiplied by millions of transactions, in hundreds of sectors simultaneously, the likely effect is a massive expansion of real purchasing power that does not appear as wage income on any payroll but operates exactly as disposable income for whoever receives it.
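The aggregation described in the last two sentences is simple arithmetic. In this sketch the SaaS row uses the $500 and $50 prices from the text, while the subscription counts and the second sector are hypothetical placeholders. Holding quantities at their old level gives the spending freed for other uses; if demand rises as prices fall, buyers' full gain is at least this large, so the figure is a conservative lower bound.

```python
from dataclasses import dataclass


@dataclass
class PriceDrop:
    sector: str      # label only
    old_price: int   # dollars per unit
    new_price: int   # dollars per unit
    units: int       # units bought per year at the old price (hypothetical)


def freed_spending(drops: list[PriceDrop]) -> int:
    """Dollars per year released for other uses if buyers keep purchasing
    the same quantities at the lower prices."""
    return sum((d.old_price - d.new_price) * d.units for d in drops)


drops = [
    PriceDrop("SaaS subscription", 500, 50, 1_000_000),  # prices from the text
    PriceDrop("hypothetical sector B", 120, 40, 3_000_000),
]
print(freed_spending(drops))  # 690000000
```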

Citrini’s GDP is not ghostly: it circulates through a channel he is not looking at because he keeps searching for income in the payroll receipt.

Citrini also needs to exaggerate the concentration of spending for the chain of contagion from white-collar employment to a consumption collapse to look as dramatic as possible, and his use of data reflects this. He claims that the richest 10% account for more than 50% of all consumer spending in the United States, and the top 20% for 65%. The figure comes from a Moody’s Analytics analysis that has been questioned by economists such as Antoine Levy of UC Berkeley, who notes that the methodology imputes consumption from Federal Reserve data that do not measure consumption directly; data from the Bureau of Economic Analysis, which does measure it, place the figure closer to 35%.

The difference is significant, but what is revealing is not so much the exact number as the selection: among available estimates, Citrini systematically chooses the one that produces the most alarming scenario.

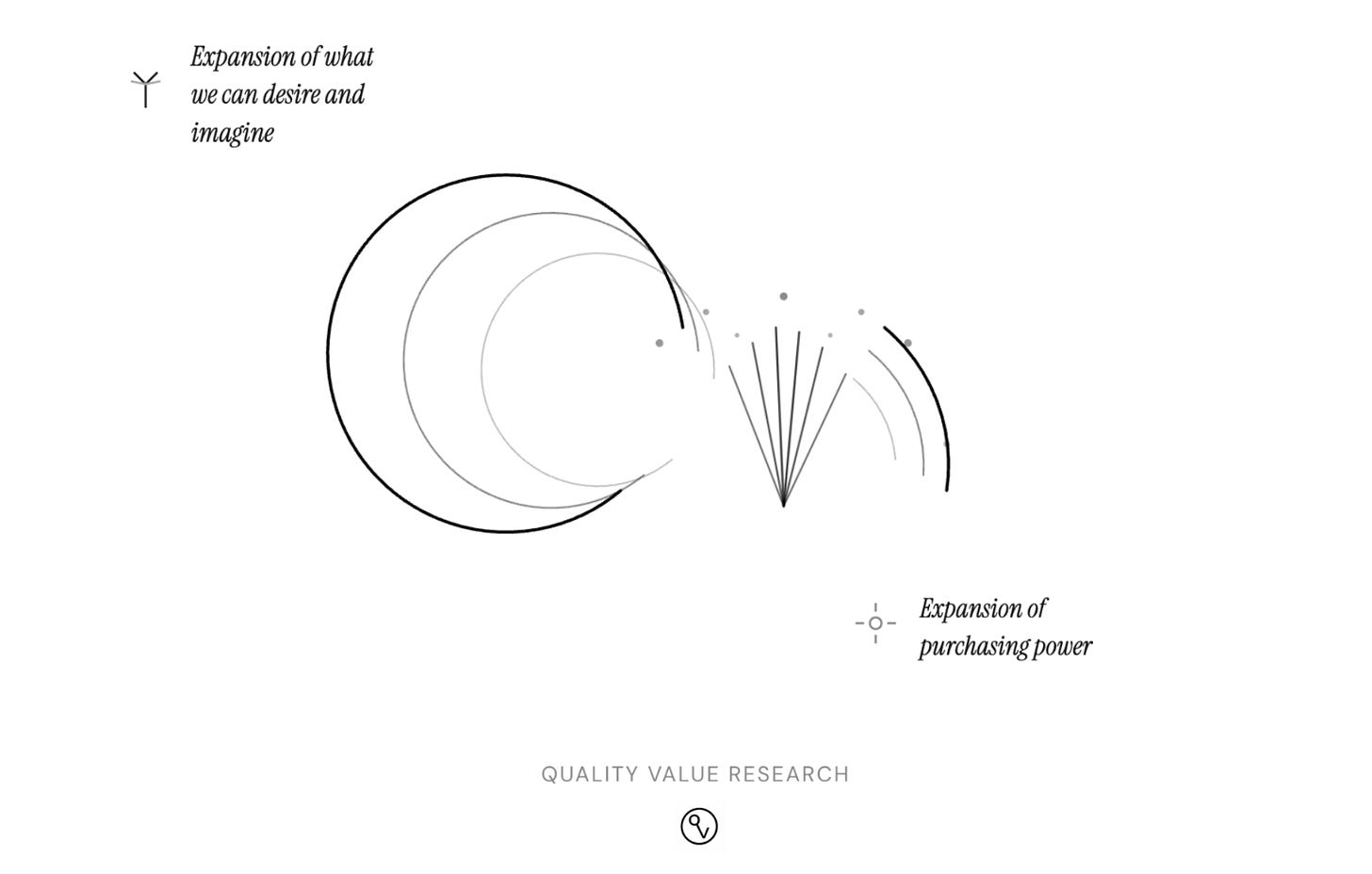

And underconsumption theory has an even deeper flaw: it implicitly assumes that the horizon of human needs is fixed, that once existing needs are satisfied the surplus finds no productive destination.

Economic history systematically disproves that premise. Human needs are not a static set that production merely satisfies; they are, in part, an endogenous product of the productive process itself. Each qualitative expansion of productive possibilities reconfigures the horizon of ends that human beings are capable of imagining and desiring. The printing press did not only cheapen existing books: it engendered the modern novel, the press, scientific popularization—cultural forms that did not exist before and that generated entirely new needs. Electricity did not only illuminate what already existed: it made conceivable what without it was unthinkable.

AI, which by hypothesis represents the greatest qualitative expansion of productive possibilities in human history, should not contract the space of unsatisfied needs but expand it radically.

Citrini’s “Ghost GDP” is an illusion produced by looking only at the jobs that disappear and not at the ends that have not yet been conceived.

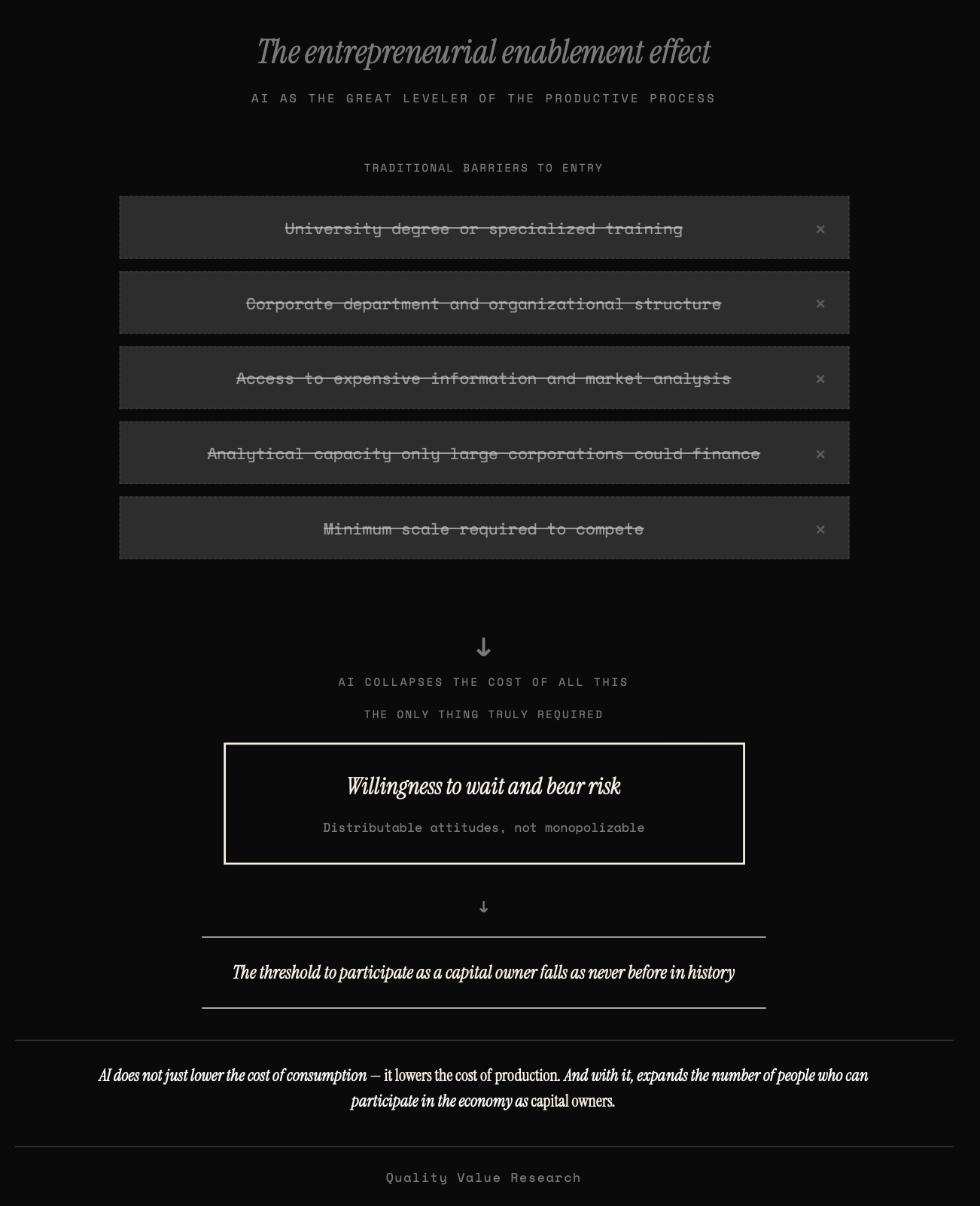

AI as the Great Leveler: The Transition from Worker to Capitalist

Price reduction has a second effect, perhaps more important than the first, and one that bears directly on whether the substitution dynamic amounts to a collapse of aggregate demand.

Each price reduction in the inputs that a productive activity requires lowers the threshold of capital needed to undertake it, and the most drastic reduction occurs precisely in the input that has historically been the highest barrier to entry: human capital, the intellectual and training component of any activity.

Consider what this means concretely. The renovation contractor who previously needed $200,000 to set up a competitive operation—between management software, design, accounting, permit analysis, and construction planning—now needs a fraction of that, because AI has collapsed the cost of the entire intellectual component of their business. The independent financial advisor who previously required a Bloomberg terminal, a team of analysts, and an institutional software license can now offer portfolio analysis of comparable quality for a few dollars a month. The small hospitality business owner who previously outsourced accounting, marketing, and supplier management to three different companies now integrates all of that in a tool that costs less than a dinner.

In each case, the transition is the same: from needing a department to compete to being able to do so with one’s own judgment and a subscription.

And the effect does not stop at directly intellectual costs, because practically every input in a modern economy incorporates an intellectual component in its production chain—design, logistics, inventory management, process optimization, regulatory certification—so that the cheapening of intelligence propagates indirectly to the cost of inputs that are not intellectual in themselves. Construction materials are not manufactured by an AI, but their price reflects the cost of the entire chain that designs, specifies, transports, and certifies them, and that chain tends to cheapen at each link that AI touches. The cascade effect reduces the threshold of entry to business activity in ways that far exceed mere office work substitution.
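The cascade effect can be illustrated with a stylized supply chain for a physical input. The links, their costs, the share of each link's cost that is intellectual (design, planning, paperwork), and the 50% AI-driven cut to that share are all hypothetical assumptions; the sketch shows only how a cut applied to intellectual work alone still lowers the price of a non-intellectual good.

```python
def chain_cost(links: dict[str, tuple[float, float]], ai_cut: float) -> float:
    """Cost of an input after AI cheapens the intellectual part of each link.

    links maps a link name to (cost, intellectual_share); only the
    intellectual share of each link's cost is reduced by ai_cut.
    """
    total = 0.0
    for cost, share in links.values():
        physical = cost * (1 - share)
        intellectual = cost * share * (1 - ai_cut)
        total += physical + intellectual
    return total


# Hypothetical chain for a construction material, costs in dollars per unit.
links = {
    "design": (20.0, 0.8),
    "manufacturing": (50.0, 0.2),
    "transport": (20.0, 0.3),
    "certification": (10.0, 0.9),
}
before = chain_cost(links, ai_cut=0.0)
after = chain_cost(links, ai_cut=0.5)
print(round(before, 2), round(after, 2))  # 100.0 79.5
```

Even the manufacturing link, only 20% intellectual in this example, gets cheaper (from $50 to $45), which is the sense in which cheaper intelligence propagates into the price of goods that are not intellectual in themselves.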

AI, in this sense, operates as the great leveler of business activity.

It not only cheapens consumer goods but—and this is decisive—cheapens the costs of entry to the productive process itself, especially those that depended on specialized training, access to expensive information, or analytical capacity that only large corporations could finance. That is not destruction of productive capacity; it is its democratization on an unprecedented scale.

And here the argument that runs through this entire reply comes full circle.

If capital and labor become substitutive, the relevant question is not whether displaced workers will find new jobs as workers (perhaps many will not), but whether the threshold for participating in the economy as owners of capital—as agents who wait, risk, and judge through AI—falls enough to absorb a significant proportion of those who lose their position as workers.

And the answer, in an economy where intelligence as an input becomes universal and where the cascade effect cheapens everything intelligence touches, is that this threshold can fall further than ever before in history.

One does not need a university degree or a corporate department to commit one’s own resources to an uncertain project, sustain the wait for its maturation, and select among the courses of action that AI makes available.

What is needed is a willingness to absorb waiting and risk—attitudes that are distributable, not monopolizable—and AI, by collapsing the cost of everything else, makes those attitudes the only thing truly necessary.

This is what we might call the entrepreneurial enablement effect of AI: it not only cheapens consumption but cheapens production—and with it expands the number of people who can participate in the economy as owners of capital.

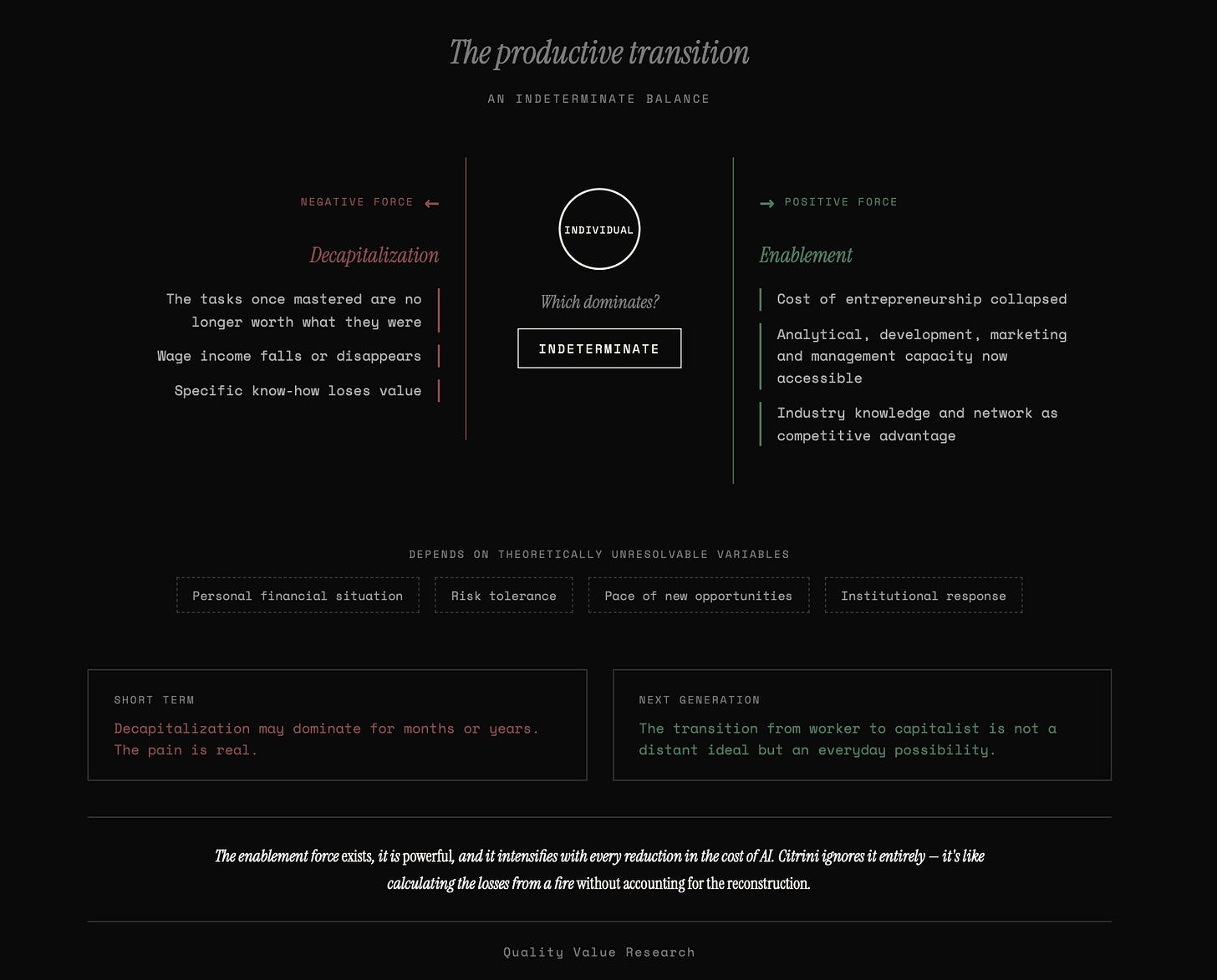

The Productive Transition: An Indeterminate Balance

This enablement effect is real, but its impact on the specific cohorts suffering the disruption is not predetermined.

The Salesforce product manager who loses their $180,000 job is subject to two opposing forces at once. On one hand, they suffer the decapitalization of their specific know-how: the tasks they mastered are no longer worth what they were, and their wage income falls or disappears. On the other hand, they live in an economy where the costs of entrepreneurship have plummeted, where AI tools give them analytical, development, marketing, and management capacity that five years ago would have required a team of ten people, and where their knowledge of the sector and their network of contacts give them a starting point that not everyone has.

Which of the two forces dominates? We do not know.

It is genuinely indeterminate, and depends on variables that cannot be resolved theoretically: their personal financial situation, their willingness to take risk, the speed with which the economy generates new business opportunities, the quality of the institutional response.

What we can affirm is that the indeterminacy resolves differently depending on the time horizon. For those in the midst of disruption, with a mortgage to pay and savings running out, the force of decapitalization may dominate the force of enablement for months or years. The pain is real, and there is no theoretical argument that alleviates it.

But for the generation that comes after—the one that grows up in an environment where the tools that previously required entire departments are within anyone’s reach—the transition from worker to capitalist is not a distant ideal but an everyday possibility. Just as today anyone with a smartphone can publish content that thirty years ago required a printing press or a broadcast station, tomorrow anyone with AI could undertake projects that today require corporate structures. The threshold drops, and with it the barrier that separated those who sold their labor from those who produced through their capital.

The net balance of these two forces—decapitalization of specific work and enablement of entrepreneurial capital—will determine the real experience of the transition for each individual, each cohort, and each region. It is not a balance that can be resolved a priori either in Citrini’s catastrophist direction or in the optimist one.

What can be affirmed is that the enablement force exists, that it is powerful, that it intensifies with each reduction in the cost of AI, and that Citrini ignores it completely. His analysis only counts decapitalization and extrapolates from there to systemic collapse. It is like calculating the losses from a fire without accounting for the reconstruction.

The Financial Transition

The most elaborate section of Citrini’s article, and the most effective as a narrative piece, is the chain of financial contagion: labor substitution erodes wage incomes, which threatens the servicing of mortgage debt and of the debt of companies whose business models AI has made obsolete, which weakens the banks and life insurers that finance private credit, which tightens credit conditions, which further depresses consumption, which accelerates the spiral.

The narrative is cinematic: Zendesk as the smoking gun, Athene as the systemic link, the prime mortgages of San Francisco as the last domino. It must be acknowledged that, as a scenario exercise, it is intelligent.

And it should not be dismissed with an optimism that the argument does not justify. If we accept that the productive transition can be disorderly—and we do accept this, because the history of every technological revolution confirms it—the financial transition will probably be disorderly as well, and possibly more violently so.

The financial system is nothing other than the contractual crystallization of expectations about future income flows. A thirty-year mortgage signed in 2024 incorporates the expectation that the debtor will maintain stable wage income for three decades. A leveraged loan against a SaaS company’s ARR incorporates the expectation that that recurring revenue will keep recurring. The debt of any company whose business model rests on the intermediation of human frictions—and Citrini documents many—incorporates the expectation that those frictions will persist.

When AI invalidates those expectations, contracts come under stress.

Credit contracts are, by design, rigid: they run for long maturities, often decades, on fixed terms, and are rarely restructured without a credit event. That is exactly what Citrini describes.

It is also true that the evolution of collateral values across the system will be ambiguous. There are reasons to think that assets that are complementary to AI and relatively scarce (well-located urban land, critical physical infrastructure, certain real estate) would tend to appreciate in real terms as everything replicable becomes cheaper.

But at the same time, some assets would lose their productive function entirely: offices designed for workforces that no longer exist, commercial infrastructure tied to intermediation models that AI has dismantled, specialized facilities for processes that are no longer necessary. The net effect on collateral is neither unambiguously positive nor unambiguously negative; it depends on the composition of each balance sheet, each portfolio, and each geographic area.

If the speed of the productive transition approaches what Citrini describes, significant credit disruptions are not only possible but probable: defaults in specific segments of private credit; bankruptcies of companies whose business models AI has made obsolete and whose debt was signed under income assumptions that no longer hold; deterioration in the mortgages of the cohorts most affected by substitution; and even episodes of systemic stress requiring extraordinary interventions by the Federal Reserve and regulators.

What does not follow is that these financial disruptions constitute evidence of a permanent structural collapse of aggregate demand.

They are evidence that the financial contracts inherited from the previous productive regime need repricing—both those that bet on the continuity of stable wage incomes and those that bet on the continuity of business models that AI is dismantling. A repricing that will be painful, disorderly, and costly for those on the wrong side, but that is, ultimately, the mechanism by which the financial system adjusts to a new productive reality.

The financial transition would be, in sum, a reflection of the productive transition: likely as disorderly and as costly as the productive transition itself.

But these disruptions are effects of the restructuring between two regimes, not the steady state of the new one. The defaults and bankruptcies that Citrini describes are not symptoms of an economy that has stopped working; they are the cost of a financial system recalibrating from the assumptions of the regime that is dying toward those of the regime that is emerging. Confusing that cost with the economy's permanent destination is, once again, the underconsumptionist error: taking a snapshot of a transitory mismatch and projecting it as if it were the final equilibrium.

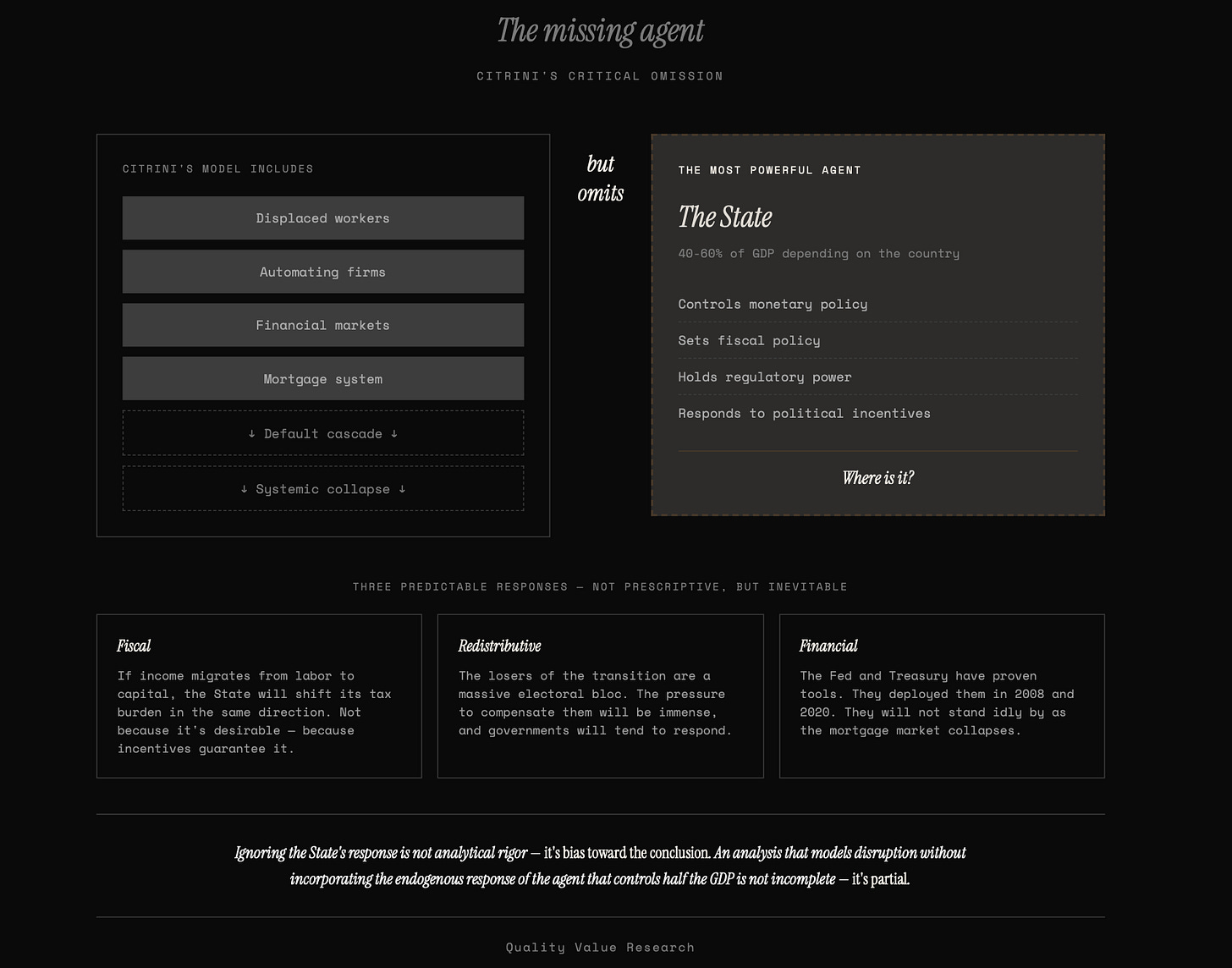

The State’s Reaction: Another Omission by Citrini

Citrini purports to write a realistic report about what happens when AI disrupts the American economy.

But his analysis omits the reaction of the largest and most powerful agent in that economy: the State.

And the omission is not minor, because the State's response is not an exogenous variable that the analyst can include or leave out at will; it is an endogenous consequence of the very incentives Citrini describes. When millions of voters lose their jobs and the financial system comes under stress, governments do not stand by and watch.

They act—not necessarily well, not necessarily in time, but they act. Ignoring that reaction is not analytical rigor; it is partiality.

What follows is not a prescription of what the State should do, but a description of what it will foreseeably do, driven by the political incentives that the disruption itself generates.

Consider the three most foreseeable dimensions of that response.

The first is fiscal. Citrini presents the erosion of the tax base (federal revenues fall because they depend heavily on taxing payrolls, and payrolls dry up) as if it were a dead end. But States are not passive collectors who watch their tax base disappear without reacting. If income migrates from labor to capital, that is, if the surplus from AI productivity flows to infrastructure owners, to shareholders of the companies that adopt it, and to corporate profits and capital gains, the State will tend to shift its fiscal pressure in the same direction. Not because it is desirable (there are solid arguments against capital taxation), but because political incentives all but guarantee it: a State that needs revenue and sees where the income is will tax that income. The fiscal history of the last two centuries is a succession of adaptations of the tax base to the productive structure of each era, and there is no reason to suppose that this time will be different. The State's fiscal balance does not have to deteriorate as Citrini describes; it will tend to reconfigure, with the distortions and costs that every fiscal reconfiguration entails, but it will tend to reconfigure.

The second is redistributive. The losers of the transition, the cohorts whose specific human capital is decapitalized, constitute an enormous electoral bloc, concentrated in the upper-middle income segments that participate most in the political process. The pressure to compensate them through direct transfers, retraining programs, employment subsidies, or guaranteed income mechanisms will foreseeably be immense, and governments, whether out of conviction, electoral calculation, or simple fear of social disorder, will tend to respond to that pressure. Citrini himself implicitly acknowledges this when he describes proposals like the "Transition Economy Act" or the "Shared AI Prosperity Act" in his scenario; what he does not do is incorporate the effect of those interventions into his causal chain. He mentions them as part of the political landscape and dismisses them as insufficient or late, without modeling their dampening effect on the consumption dynamic he describes as catastrophic. That the compensation will be imperfect, late, and politically contentious is likely. That it will be nonexistent is implausible.

The third is financial. The implosion of private credit and the mortgage market that Citrini describes in such detail would unfold, if it unfolds at all, in front of a Federal Reserve and a Treasury that have proven tools to intervene in exactly this type of crisis. Purchases of mortgage-backed securities (MBS), emergency liquidity provision, forbearance and refinancing programs, the explicit or implicit backing of systemic institutions: all of this was deployed in 2008 and in 2020 with results that, whatever their long-term costs, averted the cascade of defaults that Citrini takes as inevitable. Will this type of intervention generate distortions, moral hazard, and future costs? Without a doubt. But an analyst who claims to describe what will happen cannot build a scenario on the assumption that the central bank will passively watch the mortgage market collapse without lifting a finger. It has never done so, and it will foreseeably not do so this time.

None of these observations is prescriptive. I am not arguing that state intervention is desirable, nor that its effects will be benign, nor that it will not generate its own distortions and problems.

I am arguing something simpler: that it is predictable. An analysis that purports to model the macroeconomic consequences of AI disruption without incorporating the endogenous reaction of the agent that spends between 40% and 60% of GDP depending on the country, that controls monetary policy, that holds regulation in its hands, and that responds directly to the political incentives generated by the disruption itself is not merely incomplete; it is biased in the direction of its own conclusion.

Conclusion

Citrini ends his article with a warning: “the canary is still alive.”

He says it as a call to act before it is too late. I share the urgency, but not the diagnosis that motivates it.

The canary to watch is not the one Citrini describes. His canary—the collapse of aggregate demand through labor substitution—presupposes that the economy only knows how to generate demand through one channel (the payroll), that human beings only know how to participate in production in one form (as workers), and that institutions only know how to operate under one regime (the inherited one). None of those three premises survives scrutiny.

The real canary is a different one. It is the speed of the transition relative to the adaptive capacity of the specific people who live through it: how many cohorts will be trapped between a regime that is dying and another that has not yet consolidated, and for how long? It is the political response to that speed: will it be agile enough to ease the passage without being so clumsy that it obstructs the wealth creation process that makes the passage possible?

And above all, it is the question that precedes all others and that Citrini treats as settled without even posing it: will we keep AI as a human instrument, or will we allow it, through negligence, haste, or incapacity, to end up controlling us?

If AI remains an instrument, the economy will tend to adjust. The adjustment will be painful for some, politically contentious, and ethically demanding, although the net balance may be positive even during the transition.

But the likely direction is toward a more productive regime, with a broader space for value creation and more democratic access to the productive process than the current one. If AI ceases to be an instrument, none of these arguments matter—neither Citrini’s nor mine.

The canary is still alive. What threatens to kill it is not that AI produces too much. It is that we mistake the transition for the destination and, convinced that collapse is inevitable, adopt a partial and incomplete view of the transition process that leads us to sacrifice the wealth creation process in the name of a distribution that can only be sustained if that process continues.